Serverless Integration for Large Language Model (LLM) using AWS Lambda

About

In this blog, we’ll use a Large Language Model (LLM) to summarize a web page and store the summary in an Amazon DynamoDB table. The web page’s URL serves as both the input source and the key for storing the summary in the table.

The above is the latest code. Please use the source code link provided in each implementation step for consistency with the blog content.

Prerequisites

- A computer. If you are using Windows, make sure it is Windows 11 or a recent version of Windows 10 (version 1903 or higher, with build 18362 or higher).

- An AWS account. You can create a free account if you don’t have one yet.

- Install Docker Desktop by clicking the Download Docker Desktop link.

- Install Visual Studio Code and the Dev Containers extension.

- Install Git.

- Clone this repo and open it in Visual Studio Code. (Choose “Reopen in container” when asked.)

- Install AWS CLI and CDK by running `./script/install_tools.sh` in Visual Studio Code’s Integrated Terminal.

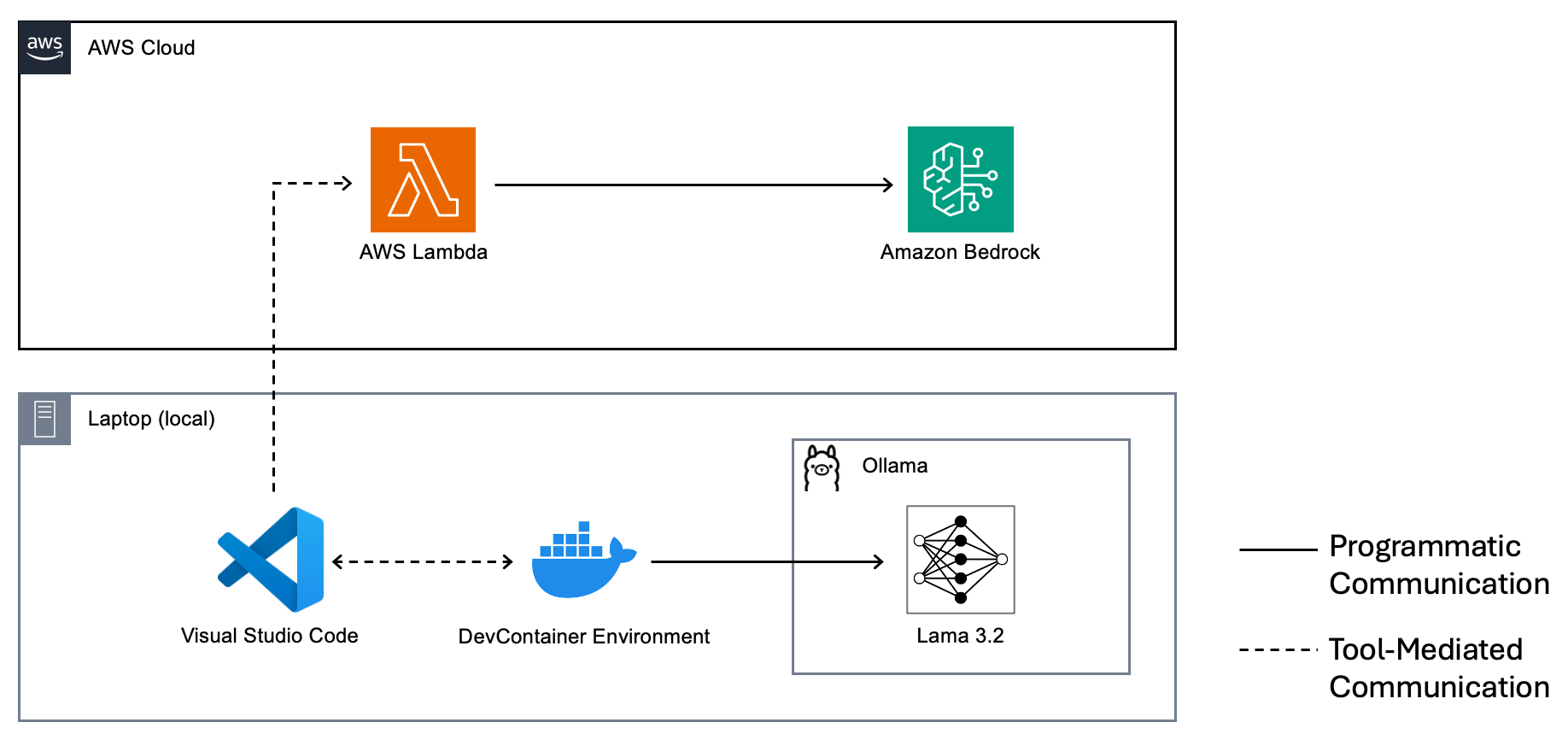

Architecture

References

- Udemy - LLM Engineering: Master AI, Large Language Models & Agents Created by Ligency Team, Ed Donner

- GitHub - llm_engineering by Ed Donner

- AWS - Track, allocate, and manage your generative AI cost and usage with Amazon Bedrock by Kyle Blocksom and Dhawalkumar Patel on 01 NOV 2024

Step by step implementation

1 - Hello World from Ollama

This guide walks you through setting up a local AI development environment using Ollama for running large language models and DevContainers in Visual Studio Code for a consistent, isolated setup. It covers installation, configuration, and making REST API calls to Ollama, with specific instructions for Windows users using WSL 2.
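To give a flavour of the REST call mentioned here, this minimal sketch posts a single user message to Ollama's `/api/chat` endpoint using only the standard library. It assumes Ollama is listening on its default port 11434 and that a model tagged `llama3.2:1b` has been pulled; both are assumptions for this example.

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # default local endpoint
DEFAULT_MODEL = "llama3.2:1b"  # assumed model tag; use whichever model you pulled


def build_chat_payload(prompt: str, model: str = DEFAULT_MODEL) -> dict:
    """Build a non-streaming chat request body with one user message."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON object instead of a stream
    }


def chat(prompt: str, model: str = DEFAULT_MODEL, url: str = OLLAMA_CHAT_URL) -> str:
    """Send the prompt to Ollama and return the assistant's reply text."""
    body = json.dumps(build_chat_payload(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["message"]["content"]
```

Calling `chat("Say hello")` requires a running Ollama server. `build_chat_payload` can be inspected on its own to see the request shape.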

2 - Hello World from Amazon Bedrock

This guide details how to use Amazon Bedrock and AWS Lambda to deploy and invoke the Llama 3.2 1B model in a serverless environment. It covers enabling model access, creating a Python-based Lambda function, configuring IAM permissions, and testing the setup, with step-by-step instructions for seamless AI integration.
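A Lambda function of this kind might look roughly like the sketch below, which uses the Bedrock Runtime Converse API. The model identifier and the `prompt` event field are assumptions for this example; check the Bedrock console for the identifier available in your Region.

```python
import json
from typing import Any

# Assumed identifier for Llama 3.2 1B Instruct; it may differ per Region or account.
MODEL_ID = "us.meta.llama3-2-1b-instruct-v1:0"


def ask_bedrock(client: Any, prompt: str, model_id: str = MODEL_ID) -> str:
    """Send one user message through the Converse API and return the reply text."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
    )
    return response["output"]["message"]["content"][0]["text"]


def lambda_handler(event: dict, context: Any, client: Any = None) -> dict:
    """Lambda entry point; the optional client argument exists only for testing."""
    if client is None:
        import boto3  # available by default in the Lambda Python runtime

        client = boto3.client("bedrock-runtime")
    prompt = event.get("prompt", "Hello!")  # hypothetical event field
    reply = ask_bedrock(client, prompt)
    return {"statusCode": 200, "body": json.dumps({"reply": reply})}
```

The function's execution role would also need permission to invoke the model, which is the IAM step the guide refers to.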

3 - Fetch HTML with a URL

This guide explores how to use BeautifulSoup to extract plain text from URLs and build a reusable web scraper for integration into AI-driven applications like AWS Lambda. It includes step-by-step instructions for creating and testing a Python-based scraper with a modular class design.
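The idea of turning HTML into plain text can be sketched without extra dependencies. Note that the example below swaps BeautifulSoup for `html.parser` from the Python standard library, so it illustrates the technique rather than the post's actual scraper; the class and function names are made up for this example.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text, skipping script, style, and noscript content."""

    SKIPPED_TAGS = {"script", "style", "noscript"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0            # > 0 while inside a skipped element
        self._chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if self._skip_depth == 0 and text:
            self._chunks.append(text)

    def text(self) -> str:
        return "\n".join(self._chunks)


def html_to_text(html: str) -> str:
    """Return the visible text of an HTML document, one chunk per line."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()
```

Fetching the HTML itself is left out here; any HTTP client can supply the string passed to `html_to_text`.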

4 - Summarize Web Content using Ollama

This guide shows how to create a modular Python application for web scraping and AI summarization using BeautifulSoup and Ollama. It covers organizing scraper and chat services into a reusable module, integrating them in a main script, and generating summaries from online content.
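One way to picture the modular design is a small function that receives the scraper and the chat service as plain callables. The function name, the prompt wording, and the `max_chars` cut-off are all assumptions for this sketch.

```python
from typing import Callable


def summarize_url(
    url: str,
    fetch_text: Callable[[str], str],  # scraper: URL -> plain text
    chat: Callable[[str], str],        # chat service: prompt -> model reply
    max_chars: int = 4000,             # assumed cap to keep the prompt small
) -> str:
    """Fetch a page's text, wrap it in a summarization prompt, and ask the model."""
    text = fetch_text(url)[:max_chars]
    prompt = f"Summarize the following web page content:\n\n{text}"
    return chat(prompt)
```

Because both dependencies are injected, the same function could work with an Ollama-backed or a Bedrock-backed chat service.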

5 - Summarize Web Content using Amazon Bedrock

This article guides you through building a modular Python application to leverage Large Language Models (LLMs) with AWS Lambda. Learn to set up AWS CLI with IAM Identity Center, create a Bedrock Chat Service, implement web scraping and summarization, and automate Lambda deployment with a ZIP file. Includes practical code examples and verification steps.

6 - A Small Step Towards Production Readiness

This post guides us through improving Python code quality using Ruff, a fast linter and formatter, and pre-commit for automated checks. It also covers structuring LLM prompts with a Prompt model for scalable AI integrations, including updates to Ollama and Bedrock chat services.
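A Prompt model along these lines could be sketched as a small dataclass that renders itself for each backend. The field names `system` and `user` and both method names are assumptions for this example; the post's own model may be shaped differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prompt:
    """A system instruction paired with a user message."""

    system: str
    user: str

    def to_ollama_messages(self) -> list[dict]:
        """Render as the role/content message list used by Ollama's chat endpoint."""
        return [
            {"role": "system", "content": self.system},
            {"role": "user", "content": self.user},
        ]

    def to_bedrock_converse(self) -> dict:
        """Render as keyword arguments for the Bedrock Runtime converse call."""
        return {
            "system": [{"text": self.system}],
            "messages": [{"role": "user", "content": [{"text": self.user}]}],
        }
```

Keeping the prompt in one structure means each chat service only has to pick the rendering it needs, for example `client.converse(modelId=..., **prompt.to_bedrock_converse())`.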